Using AI in Law Firm Performance Reviews: How to Sharpen Feedback Without Replacing Human Judgment

As artificial intelligence becomes an integral part of legal workflows, law firms are exploring other areas where AI can improve efficiency, including performance development. Performance evaluations produce a significant volume of data in law firms, yet turning that data into meaningful insight often takes weeks of manual effort. At the same time, Professional Development teams must chase down reviewers, improve the clarity and quality of written evaluations, and consolidate information from systems that rarely connect seamlessly, such as spreadsheets and the firm’s HRIS. Many firms are asking whether AI tools can help solve these challenges.

The promise is appealing: AI can streamline administrative work, synthesize large amounts of feedback, and improve consistency across reviewers. But successful use of AI in performance management is not primarily a technology challenge; it is a human one. Used thoughtfully, AI can strengthen the review process by supporting, not replacing, human perspective. Used carelessly, it can undermine trust, introduce bias, and erode the very purpose of feedback.

The most effective use of AI in performance reviews follows a simple principle: technology should enhance the human perspective, not substitute for it.

AI as a Complement, Not a Replacement

Performance reviews in law firms are all about human interactions. They shape careers, influence compensation, and reflect mentorship. They are built on lived experiences, and AI should not replace that.

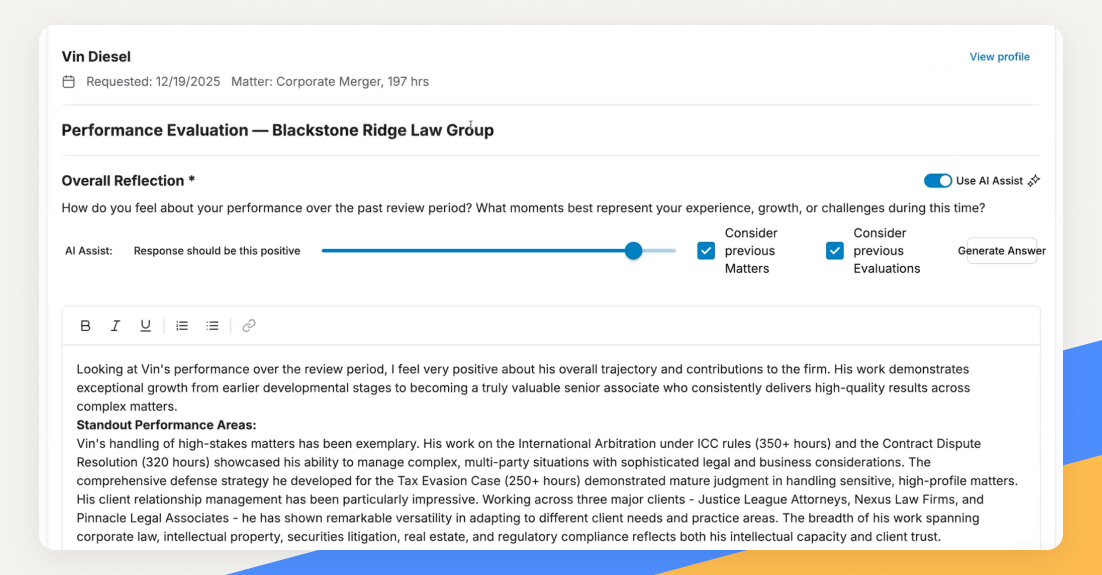

Instead, AI can assist reviewers in articulating clearer, more actionable feedback while leaving the underlying judgment entirely in human hands. For example, AI can help reviewers turn vague feedback like “needs to communicate better” into more actionable observations such as “should provide earlier updates to supervising partners when deadlines shift.” It can ensure that core competencies are addressed, or help to organize fragmented notes into a structured narrative.

In this way, AI enhances the quality of the review without replacing the reviewer’s insight. Real people should power final evaluations, conclusions, and decisions.

Strengthening the Review Framework

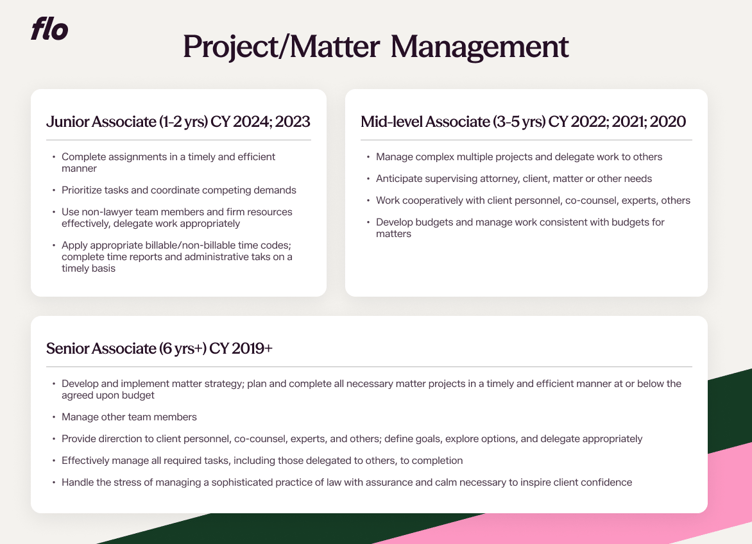

One of the most valuable ways AI can support Professional Development teams occurs before reviews are even written: improving the structure of the evaluation framework. Strong questions drive strong feedback.

AI can help review evaluation questions and templates for competency alignment, question clarity, and template consistency, thus reducing ambiguity.

Similarly, AI can help check for consistent scoring standards across evaluations. If one partner regularly rates associates more harshly than others, or if scoring language varies significantly, those discrepancies can be flagged for human review.
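The scoring check described above can be sketched in a few lines. This is a minimal illustration, not a prescribed method: the function name `flag_outlier_reviewers`, the 1-to-5 scale, the one-point threshold, and the sample data are all assumptions made for the example.

```python
from collections import defaultdict
from statistics import mean


def flag_outlier_reviewers(ratings, threshold=1.0, min_reviews=3):
    """Return {reviewer: gap} for reviewers whose average score differs
    from the firm-wide average by more than `threshold` points.

    `ratings` is a list of (reviewer, score) pairs. The threshold and the
    minimum number of reviews are illustrative assumptions.
    """
    by_reviewer = defaultdict(list)
    for reviewer, score in ratings:
        by_reviewer[reviewer].append(score)

    firm_mean = mean(score for _, score in ratings)

    flagged = {}
    for reviewer, scores in by_reviewer.items():
        if len(scores) < min_reviews:
            continue  # too few reviews to say anything meaningful
        gap = mean(scores) - firm_mean
        if abs(gap) > threshold:
            flagged[reviewer] = round(gap, 2)
    return flagged


# Hypothetical scores on a 1-to-5 scale.
sample_ratings = [
    ("Partner A", 4), ("Partner A", 4), ("Partner A", 5),
    ("Partner B", 2), ("Partner B", 2), ("Partner B", 3),
    ("Partner C", 4), ("Partner C", 3), ("Partner C", 4),
]
flagged = flag_outlier_reviewers(sample_ratings)
print(flagged)
```

A flag here is only a prompt for a human to look closer. A gap may reflect the work a partner supervises rather than a harsher standard.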

Consistent and fair performance evaluations affect not only compensation but also overall satisfaction and talent retention. With as many as 30-50% of new hires leaving within their first few years, losing top talent for lack of development insight can have a major economic impact.

Improving the Outcomes

Tone matters in performance reviews. Even when evaluation frameworks are strong, the quality of feedback still depends heavily on how reviewers communicate. The same observation can be received as constructive guidance or personal criticism depending on how it is framed. AI tools can assist reviewers by flagging language that may come across as overly vague, emotionally charged, or potentially biased. They can also suggest clearer, more objective phrasing and encourage specificity.

However, because AI models are trained on historical data, they may themselves reflect embedded biases. Outputs must always be reviewed carefully to ensure fairness, inclusion, and equity.
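To make the flagging idea concrete, here is a deliberately simple sketch. It uses a fixed phrase list as a stand-in for whatever language model or tool a firm actually uses. The phrase list, the follow-up prompts, and the function name `flag_vague_language` are all hypothetical.

```python
# Hypothetical phrase list: each vague phrase maps to a question that
# nudges the reviewer toward a specific, observable example.
VAGUE_PHRASES = {
    "needs to communicate better": "Which communication, with whom, and at what point?",
    "good attitude": "What did the associate do that showed this?",
    "not a team player": "What specific behavior led to this impression?",
}


def flag_vague_language(comment):
    """Return (phrase, follow-up question) pairs found in the comment.

    The function only raises questions; it never rewrites the comment,
    so the reviewer stays in control of the final wording.
    """
    lowered = comment.lower()
    return [
        (phrase, question)
        for phrase, question in VAGUE_PHRASES.items()
        if phrase in lowered
    ]


flags = flag_vague_language("Alex needs to communicate better on deals.")
for phrase, question in flags:
    print(f"Vague: '{phrase}'. Consider: {question}")
```

The reviewer decides whether and how to revise. A fixed list like this one will miss many cases and, like any automated check, can carry its author’s own blind spots.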

AI can also support consistency by analyzing completed reviews, synthesizing themes across multiple reviewers, and identifying common strengths or recurring development areas. This can make consensus discussions more efficient while preserving the substance of the original comments. According to the 2025 “Performance Evaluations – Measuring What Matters: Evaluation Practices in Leading Law Firms” NALP Foundation report, roughly two-thirds of firms reported that their Professional Development department was responsible for compiling feedback from multiple sources and drafting summaries. More than 18% reported that they were already using AI for performance summaries, signaling significant potential for efficiency gains, and adoption appears to have grown since then. Collecting and summarizing insights can take weeks, but AI assistance can accelerate the process while promoting uniformity across groups.

This type of synthesis does not override individual observations, but should help surface patterns that might otherwise be missed and help guard against recency bias.

Guardrails for Responsible Use

Responsible use of AI in performance reviews requires several guardrails:

1. Balanced Inputs: Effective AI-supported reviews combine quantitative data with meaningful human insight, ensuring that metrics such as billable hours or assignment counts are complemented by perspectives on collaboration, judgment, client interaction, and professional growth.

2. Human Conversations at the Center: The strongest review processes center on direct conversations between supervising attorneys and associates, with written evaluations and supporting tools helping to capture and reinforce those discussions rather than replace them.

3. Human Judgment for Key Decisions: Decisions about compensation, promotion, and rankings remain grounded in professional judgment, informed by the context, experience, and discretion that firm leaders bring to evaluating an attorney’s full contribution.

4. Transparency Around AI’s Role: Responsible use of AI includes clear communication about how the technology contributes to the review process, giving attorneys and reviewers visibility into its role while maintaining confidence in the evaluation framework.

5. Protect Confidential Data: Strong AI practices prioritize safeguarding sensitive and identifying employee information, ensuring confidential data is not shared with public, consumer-grade AI tools and that any AI tools used align with firm data protection, security, and privacy standards.
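As one illustration of the data-protection guardrail, the sketch below replaces known names and email addresses with placeholders before review text leaves the firm’s systems. It is a minimal example under stated assumptions: the function name `redact`, the placeholder format, and the sample note are hypothetical, and simple pattern matching will miss nicknames, client names, and matter details.

```python
import re

# Simple email pattern, for illustration only.
EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")


def redact(text, known_names):
    """Replace known names and email addresses with placeholders.

    Returns the redacted text plus a mapping from placeholder to name,
    so the original names can be restored inside the firm afterwards.
    """
    mapping = {}
    # Longest names first, so a full name is replaced before any shorter
    # name that might be contained within it.
    ordered = sorted(known_names, key=len, reverse=True)
    for index, name in enumerate(ordered, start=1):
        placeholder = f"[PERSON_{index}]"
        pattern = re.compile(rf"\b{re.escape(name)}\b", re.IGNORECASE)
        if pattern.search(text):
            text = pattern.sub(placeholder, text)
            mapping[placeholder] = name
    text = EMAIL_PATTERN.sub("[EMAIL]", text)
    return text, mapping


note = "Jane Doe should update Sam Lee earlier; reach her at jane.doe@example.com."
redacted, mapping = redact(note, ["Jane Doe", "Sam Lee"])
print(redacted)
```

A step like this supplements, rather than replaces, the firm’s own data protection, security, and privacy standards. Redacted text should still be treated as confidential.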

Supporting Reviewers in Real Time

One of the most practical uses of AI in performance reviews occurs while feedback is being written. As a partner drafts feedback, AI can suggest ways to clarify language, reduce repetition, or ensure that comments align with stated competencies. It can also encourage more specificity by prompting for examples.

This kind of augmentation improves the overall quality of reviews while keeping the human reviewer fully in control. When implemented correctly, AI reduces friction for reviewers and saves time for PD teams. It can accelerate consensus-building, strengthen documentation, and enhance clarity, without diminishing the human insight that makes feedback meaningful.

The Bottom Line

AI is not a substitute for leadership, mentorship, or human judgment. It cannot replicate the experience of a partner training a junior associate or the nuance of evaluating professional growth, but it can make that experience and judgment clearer, more rigorous, and more consistent.

How is your law firm implementing AI intentionally in Professional Development? To continue the conversation and learn more about how Flo supports modern performance reviews for law firms, connect with our team.